Abstract

The ability to recover tissue deformation from visual features is fundamental to many robotic surgery applications. Although this is a long-standing research topic in computer vision, it remains unsolved because of the complex dynamics of soft tissue under manipulation by surgical instruments. Ambiguous pixel correspondence caused by homogeneous texture makes dense and accurate tissue tracking even more challenging. In this paper, we propose a novel self-supervised framework to recover tissue deformations from stereo surgical videos. Our approach integrates semantics, cross-frame motion flow, and long-range temporal dependencies so that the recovered deformations reflect actual tissue dynamics. Moreover, we incorporate diffeomorphic mapping to regularize the warping field so that it is physically realistic. To comprehensively evaluate our method, we collected stereo surgical video clips containing three types of tissue manipulation (i.e., pushing, dissection, and retraction) from two types of surgery (i.e., hemicolectomy and mesorectal excision). Our method captures deformation as a 3D mesh and generalizes well across manipulations and surgeries. It also outperforms current state-of-the-art methods for non-rigid registration and optical flow estimation. To the best of our knowledge, this is the first work on self-supervised learning for dense tissue deformation modeling from stereo surgical videos.

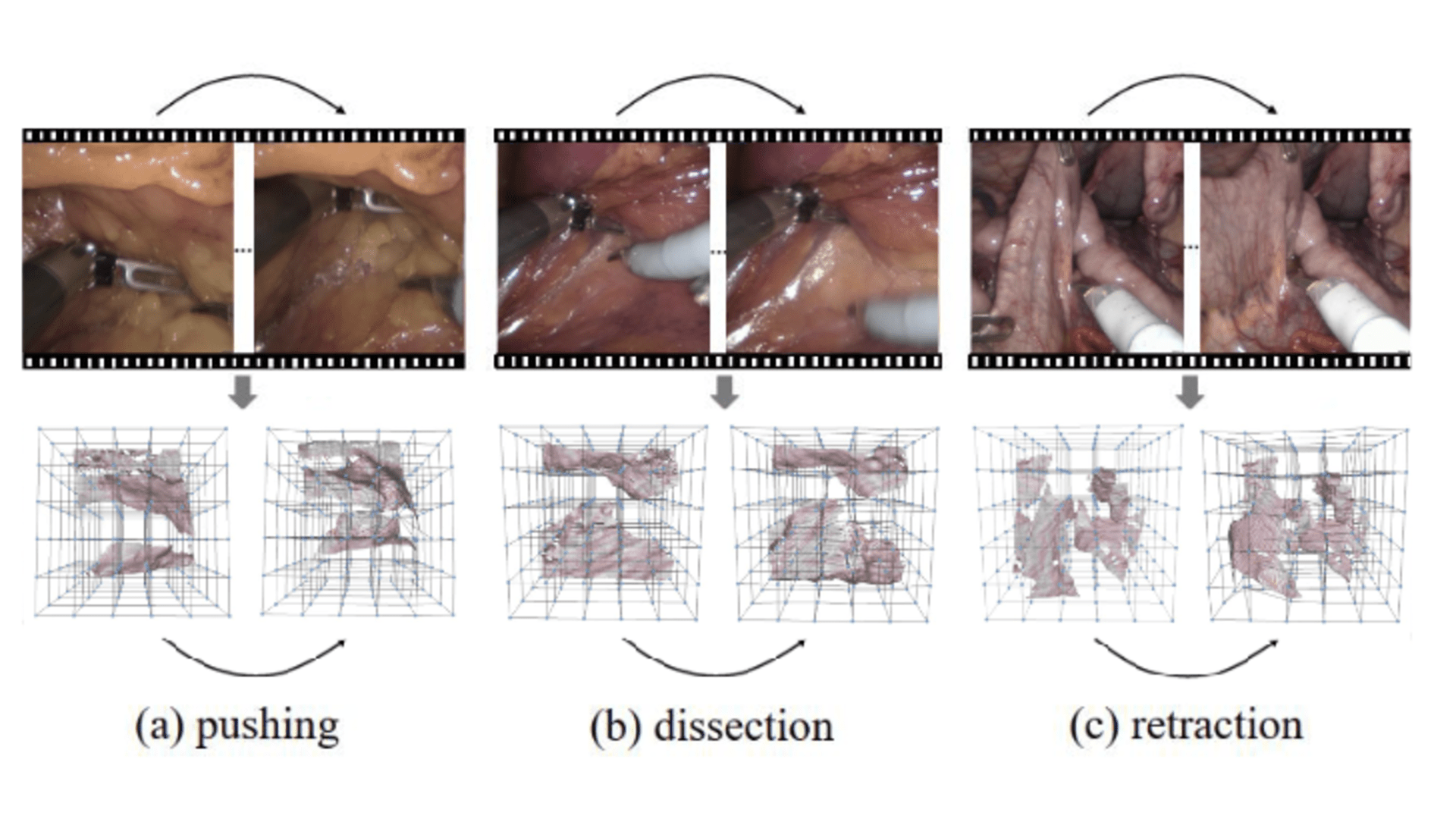

Illustration of the soft tissue deformation recovery task with examples of different manipulations. Our method represents the learned deformation field in 3D.

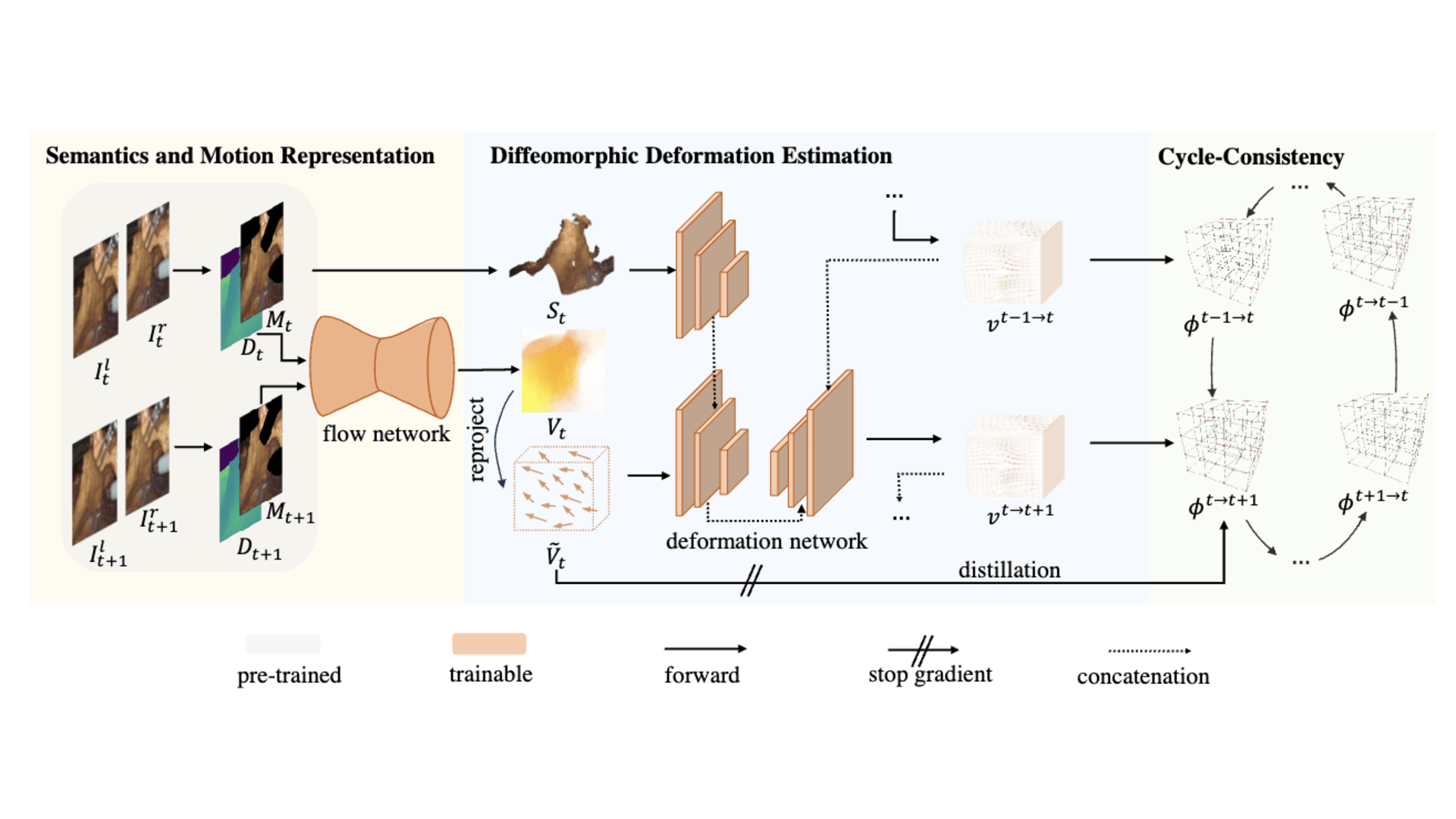

The pipeline of our proposed soft tissue deformation recovery. Given two adjacent frames $I_t$ and $I_{t+1}$, we first estimate their depth maps $D_t$/$D_{t+1}$ and tool masks $M_t$/$M_{t+1}$ to establish the semantic representation $S_t$ and the flow representation $V_t$. A deformation network aggregates these representations together with temporal context and predicts a velocity field $v_{t \to t+1}$, which is then integrated into the deformation field $\Phi_{t \to t+1}$. Cyclic consistency serves as self-supervision.
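One common way to turn a predicted velocity field into a smooth, invertible deformation is scaling and squaring. The sketch below illustrates that idea in 2D with NumPy. It is only an illustration under stated assumptions: the source does not specify the integration scheme, the method itself operates in 3D, and the function names `bilinear_sample` and `integrate_velocity` are hypothetical.

```python
import numpy as np

def bilinear_sample(field, ys, xs):
    """Sample a field of shape (H, W, C) at float coordinates, clamping to the border."""
    h, w = field.shape[:2]
    ys = np.clip(ys, 0, h - 1)
    xs = np.clip(xs, 0, w - 1)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, h - 1)
    x1 = np.minimum(x0 + 1, w - 1)
    wy = (ys - y0)[..., None]
    wx = (xs - x0)[..., None]
    top = (1 - wx) * field[y0, x0] + wx * field[y0, x1]
    bottom = (1 - wx) * field[y1, x0] + wx * field[y1, x1]
    return (1 - wy) * top + wy * bottom

def integrate_velocity(velocity, steps=6):
    """Integrate a stationary velocity field (H, W, 2), stored as (dy, dx),
    into a displacement field via scaling and squaring (an assumed scheme)."""
    h, w = velocity.shape[:2]
    grid_y, grid_x = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
    # Scaling: start from a very small displacement.
    disp = velocity / (2 ** steps)
    for _ in range(steps):
        # Squaring: compose the map with itself, u(x) <- u(x) + u(x + u(x)).
        disp = disp + bilinear_sample(disp, grid_y + disp[..., 0], grid_x + disp[..., 1])
    return disp
```

Integrating the negated velocity field with the same routine gives an approximate inverse deformation, which is what makes a forward-backward consistency check natural in this setting.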

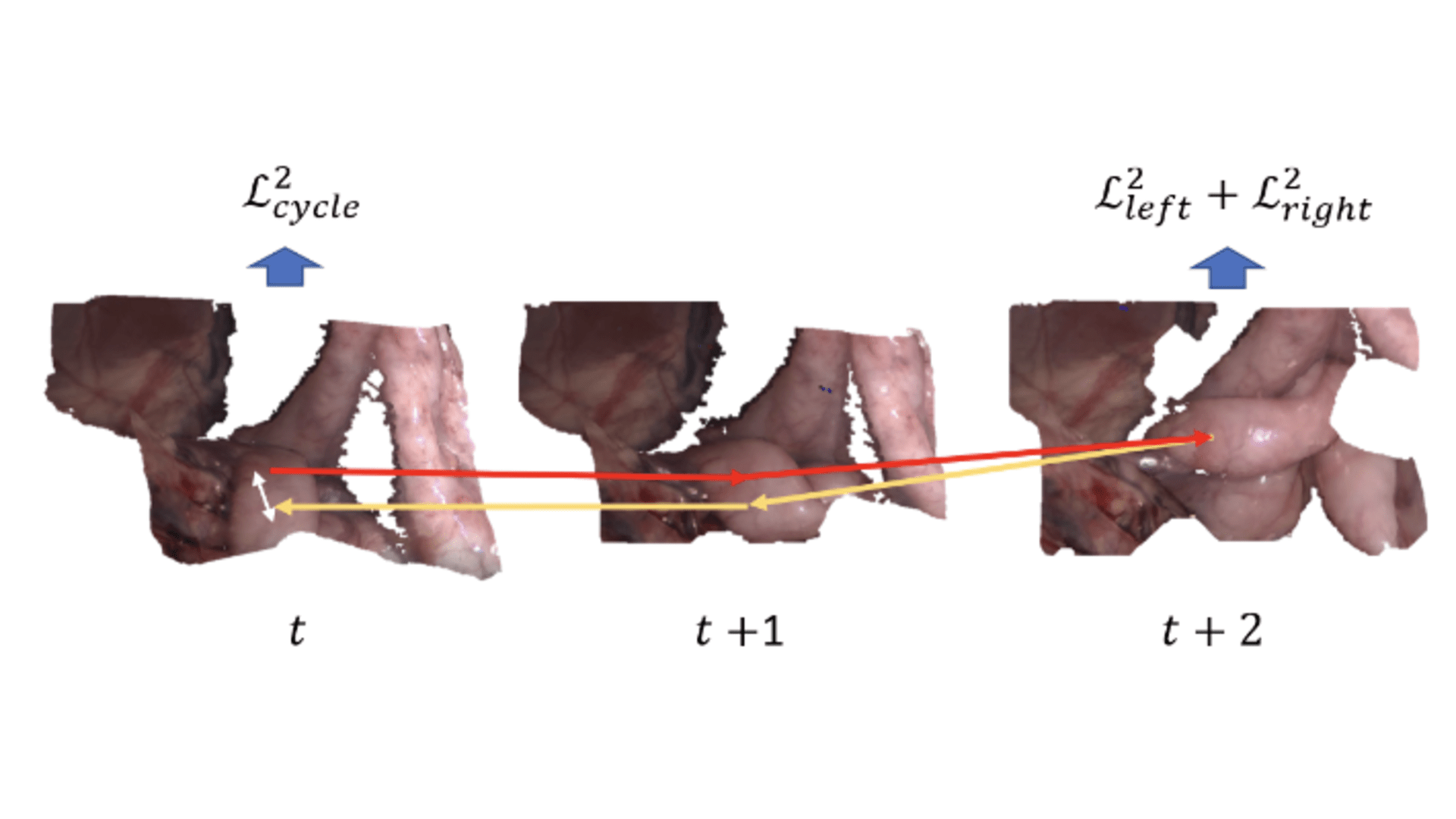

Cyclic consistency requires each point to return to its initial position after a full forward-backward cycle.
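A minimal sketch of such a forward-backward check on a 3D point set is given below. The exact loss used in the paper is not stated here, so the mean Euclidean residual and the name `cycle_consistency_loss` are assumptions chosen for illustration. The forward and backward maps are passed in as plain callables.

```python
import numpy as np

def cycle_consistency_loss(points, forward_map, backward_map):
    """Mean distance between each point and where it lands after a
    forward-then-backward cycle. points has shape (N, 3)."""
    returned = backward_map(forward_map(points))
    return float(np.mean(np.linalg.norm(returned - points, axis=1)))

# Toy example: a rigid shift and two candidate backward maps.
shift = np.array([1.0, 0.0, 0.0])
pts = np.random.default_rng(0).normal(size=(100, 3))
exact = cycle_consistency_loss(pts, lambda p: p + shift, lambda p: p - shift)
partial = cycle_consistency_loss(pts, lambda p: p + shift, lambda p: p - 0.5 * shift)
```

When the backward map exactly inverts the forward map the residual is zero; the partially inverting map leaves every point 0.5 units from where it started.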

Results

Quantitative evaluation of our method compared with existing methods. We train the model on all training data and report each metric per manipulation type (push, dissect, retract) and in total on the test set. The best number for each category is highlighted in bold.

| Models | %\|JΦ\| ≤ 0 ↓ | | | | l1-norm ↓ | | | | PSNR ↑ | | | | SSIM (%) ↑ | | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | push | dissect | retract | total | push | dissect | retract | total | push | dissect | retract | total | push | dissect | retract | total |
| NICP | 3.53 | 6.58 | 3.87 | 4.65 | 22.78 | 24.04 | 26.34 | 24.46 | 17.85 | 17.92 | 16.90 | 17.53 | 64.92 | 63.92 | 58.34 | 62.25 |
| CPD | 8.14 | 7.21 | 7.44 | 7.56 | 22.31 | 21.74 | 24.21 | 22.82 | 17.84 | 18.42 | 17.54 | 17.89 | 61.21 | 63.88 | 58.46 | 60.90 |
| SIFT | 0.15 | 0.14 | 0.41 | 0.24 | 21.03 | 20.85 | 25.31 | 22.53 | 18.78 | 18.92 | 17.65 | 18.41 | 76.46 | 76.64 | 71.47 | 74.73 |
| Harris-Laplace | 0.15 | 0.14 | 0.41 | 0.26 | 21.02 | 20.94 | 25.23 | 22.53 | 18.79 | 18.87 | 17.67 | 18.40 | 76.46 | 76.59 | 71.58 | 74.64 |
| RAFT | 3.64 | 3.86 | 3.37 | 3.61 | 13.64 | 12.00 | 13.61 | 12.89 | 21.92 | 21.60 | **22.19** | 21.90 | 84.29 | 85.59 | 82.19 | 83.97 |
| UFlow | 3.63 | 3.83 | 3.35 | 3.59 | 12.98 | 12.03 | 13.69 | 12.97 | 21.94 | 22.01 | 22.18 | 22.14 | 84.52 | 85.82 | 82.23 | 84.13 |
| Ours (+RAFT) | **0.01** | **0.01** | **0.03** | **0.02** | 13.03 | **11.94** | **13.43** | **12.80** | 22.02 | 23.09 | 21.73 | 22.27 | 84.61 | 85.97 | **82.82** | 84.42 |
| Ours (+UFlow) | **0.01** | **0.01** | **0.03** | **0.02** | **12.86** | 12.00 | 13.56 | 12.83 | **22.11** | **23.12** | 21.67 | **22.28** | **84.70** | **86.03** | 82.69 | **84.43** |
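To make two of the reported metrics concrete, the sketch below computes the percentage of locations with a non-positive Jacobian determinant (where the deformation folds) and PSNR between two images. It is a 2D NumPy illustration under assumptions: the paper's exact evaluation protocol, discretization, and image pairing are not given here, and the names `percent_nonpositive_jacobian` and `psnr` are hypothetical.

```python
import numpy as np

def percent_nonpositive_jacobian(disp):
    """Percentage of pixels where det(J) <= 0 for the map phi(x) = x + u(x).
    disp has shape (H, W, 2) and stores the displacement u as (dy, dx)."""
    duy_dy, duy_dx = np.gradient(disp[..., 0])
    dux_dy, dux_dx = np.gradient(disp[..., 1])
    # Jacobian of phi is the identity plus the gradient of u.
    det = (1.0 + duy_dy) * (1.0 + dux_dx) - duy_dx * dux_dy
    return float(100.0 * np.mean(det <= 0))

def psnr(image_a, image_b, peak=255.0):
    """Peak signal-to-noise ratio in dB; peak=255 assumes 8-bit intensities."""
    diff = image_a.astype(float) - image_b.astype(float)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")
    return float(10.0 * np.log10(peak ** 2 / mse))
```

A value near zero for the first metric, as in the last two table rows, indicates that the deformation field almost never folds, which is the property a diffeomorphic regularization is meant to provide.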

BibTeX

@article{gong2024self,
  title={Self-Supervised Cyclic Diffeomorphic Mapping for Soft Tissue Deformation Recovery in Robotic Surgery Scenes},
  author={Gong, Shizhan and Long, Yonghao and Chen, Kai and Liu, Jiaqi and Xiao, Yuliang and Cheng, Alexis and Wang, Zerui and Dou, Qi},
  journal={IEEE Transactions on Medical Imaging},
  volume={43},
  number={12},
  pages={4356--4367},
  year={2024}
}